Mathematician Rachel Ward Sees the Big Picture

Every year, Americans undergo about 30 million MRIs. Though MRIs are painless and noninvasive, waiting through the procedure is anything but pleasant. The scans are the gold standard of medical diagnostics, but they can cause feelings of intense claustrophobia, and patients are often eager to keep the procedure as short as possible.

Indeed, researchers have long explored ways to make imaging tools like MRIs more efficient. An MRI takes as long as it does because technicians must snap a certain number of images, from a certain number of angles, for doctors to reach an informed diagnosis. Too few pictures, and they might miss a tumor. But too many, and the patient could be stuck inside the MRI for hours.

In 2013, mathematician Rachel Ward and her colleague Deanna Needell discovered an applied mathematics solution that serves as the basis for choosing which angles will be most informative in a short amount of time. Their results ended up winning them the prestigious Institute for Mathematics and its Applications Prize, which “recognizes an individual who has made a transformative impact on the mathematical sciences and their applications.”

As Needell recalls, “That was a really tough problem; a lot of people tried proving a result there and didn’t make it.” The key breakthrough, Ward remembers, came after she had been “obsessively looking for the right result to apply for many months.” She found it in the related field of approximation theory and applied it to the image processing problem in MRIs. Importing a novel insight from an unusual standpoint is Ward’s signature mathematical move, one she has made time and again across many subfields.

“Something that is super amazing about Rachel is that she makes very substantial contributions to very different problems,” says her former student Soledad Villar, now a professor at Johns Hopkins University. “She doesn’t have one specific thing that she does that she’s an expert in; she does many things and brings new ideas to different areas.”

Importing unexpected results from various fields and seeing the big picture have played a significant role in getting Ward to where she is today. In 2022, she was invited to speak at the International Congress of Mathematicians, the largest mathematics conference in the world, which occurs every four years. She became a Simons Foundation Fellow in 2020, won a von Neumann fellowship in 2019 at the Institute for Advanced Study, and received the Sloan Fellowship in 2012. She is now the W.A. “Tex” Moncrief Distinguished Professor in Computational Engineering and Sciences — Data Science and Professor of Mathematics at the University of Texas at Austin.

“I enjoy identifying emerging problems and translating between different modalities of thinking,” says Ward. “Scientists from various engineering or computational sciences bring my attention to compelling questions, like why is this algorithm working so well, or why is there a discrepancy between what current theory says and what we’re seeing in practice? I like the process of distilling simple but meaningful math questions from the engineering problems.”

Learning the art of mathematics

Despite being the daughter of a math professor and a computer programmer at Texas A&M University, Ward didn’t think she’d end up in math. In fact, she wryly recalls that after she declared her math major at UT Austin, an old teacher returned a note she had written in kindergarten that said “I hate math.” She had switched from her original field of study, biochemistry, after becoming frustrated with the meticulous lab work the major required.

“The more abstract math classes, like real analysis and linear algebra, really made a lot more sense to me — I thought: this is not stressful; this is fun,” Ward says. She followed that “fun” with two summer research experiences. “I hadn’t realized until these research experiences that math is really an art. There’s an art to asking questions and connecting different topics with applications.”

After graduating, Ward chose computational math for graduate school and headed to Princeton University to work with Ingrid Daubechies, a star in the world of image compression and data processing. At one point, Daubechies handed her a paper about the new area of compressed sensing, a blend of Ward’s favorite topics of probability, convex optimization and analysis. After a summer of reading and a few years of experimenting with different methods, Ward arrived at what Daubechies remembers as “a beautiful insight” about the new field.

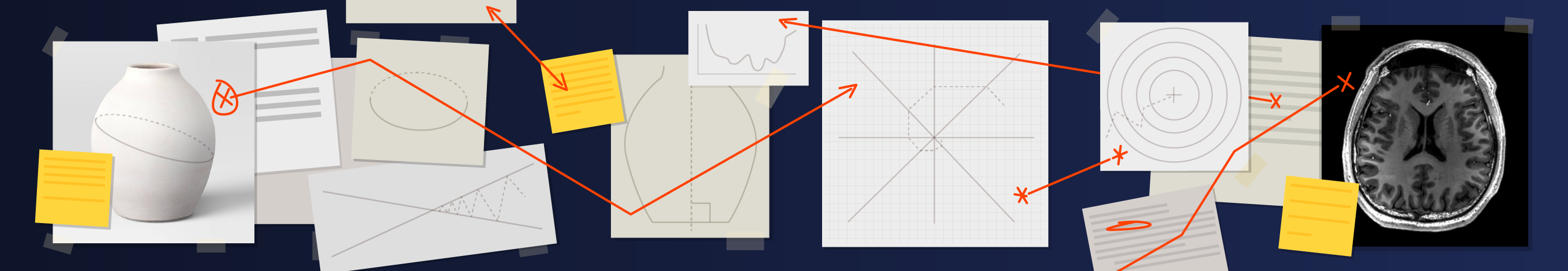

Suppose you want to take a picture of, and then reconstruct, a simple drawing. Rather than record every pixel of the image, marking each as “black” or “white” (which would take up hundreds of thousands of bits of memory), you could save just the comparatively few locations where colors change and still reconstruct the drawing. This idea, that most images can be described by far fewer numbers than they have pixels, underlies much of image processing. In the paper that Daubechies handed Ward, authors Emmanuel Candès, Justin Romberg and Terence Tao sketched out compressed sensing, a new approach that goes a step further: instead of recording every pixel and then throwing most of that information away, one takes only a small number of measurements to begin with and still recovers the drawing.
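To make the idea concrete, here is a minimal Python sketch (NumPy only) that recovers a sparse signal, standing in for the drawing’s few color changes, from 64 random measurements of a length-128 vector. It uses orthogonal matching pursuit, a simple greedy recovery method swapped in for illustration; the Candès-Romberg-Tao analysis is built around ℓ1 minimization, a convex program that needs a solver beyond NumPy. The function name `omp`, the sizes and the random seed are illustrative assumptions.

```python
import numpy as np

def omp(A, y, k):
    """Orthogonal matching pursuit: greedily pick k columns of A that explain y."""
    residual = y.copy()
    support = []
    coef = np.zeros(0)
    for _ in range(k):
        # Choose the column most correlated with what is still unexplained.
        j = int(np.argmax(np.abs(A.T @ residual)))
        if j not in support:
            support.append(j)
        # Least-squares fit on the chosen columns, then update the residual.
        coef, *_ = np.linalg.lstsq(A[:, support], y, rcond=None)
        residual = y - A[:, support] @ coef
    x_hat = np.zeros(A.shape[1])
    x_hat[support] = coef
    return x_hat

rng = np.random.default_rng(0)
n, m, k = 128, 64, 3                           # signal length, measurements, nonzeros
x = np.zeros(n)
x[[5, 40, 99]] = [3.0, -2.0, 1.5]              # sparse "drawing": only a few nonzeros
A = rng.standard_normal((m, n)) / np.sqrt(m)   # random measurement matrix
y = A @ x                                      # only m = 64 numbers are recorded

x_hat = omp(A, y, k)
print("exact recovery:", np.allclose(x_hat, x, atol=1e-8))
```

With these sizes recovery succeeds with very high probability; shrinking `m` toward `k` eventually makes it fail, which is the trade-off compressed sensing theory quantifies.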

Ward’s insight was a straightforward way to check the output of this algorithm and measure its error. She connected compressed sensing with a theorem from the 1980s called the Johnson-Lindenstrauss lemma, which has since proved to have massive implications in machine learning and computing.

Imagine cutting a vase into a cross section. Depending on the angle of your cut, your section will resemble a circle, an oval or a possibly skewed vase outline. Every flat view loses something, but the Johnson-Lindenstrauss lemma says that, for a finite set of points, a randomly chosen lower-dimensional view can lose surprisingly little: projecting the points into a lower dimension nearly preserves the distance between every pair of them, as long as that lower dimension is large enough (it needs to grow only with the logarithm of the number of points). Loosely, in this analogy, a snapshot taken in a lower dimension (in this case, a two-dimensional image) can faithfully capture the shape of an object in a higher dimension (here, a three-dimensional vase).
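A minimal NumPy sketch of the lemma in its usual form: project 20 points from a 2,000-dimensional space down to 400 dimensions with a random Gaussian matrix and check how much their pairwise distances change. The lemma guarantees distortion within a factor of 1 ± ε once the target dimension is on the order of log(N)/ε² for N points (constants omitted here); the specific dimensions and seed are assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(1)
num_points, d, k = 20, 2000, 400        # points, original dimension, target dimension
X = rng.standard_normal((num_points, d))

# Random Gaussian projection, scaled so squared lengths are preserved on average.
P = rng.standard_normal((d, k)) / np.sqrt(k)
Y = X @ P

def pairwise_distances(Z):
    """Euclidean distance between every pair of rows of Z."""
    diff = Z[:, None, :] - Z[None, :, :]
    return np.linalg.norm(diff, axis=-1)

i, j = np.triu_indices(num_points, k=1)  # each unordered pair once
ratios = pairwise_distances(Y)[i, j] / pairwise_distances(X)[i, j]
print(f"distance ratios range from {ratios.min():.3f} to {ratios.max():.3f}")
```

The original dimension `d` never enters the guarantee; only the number of points and the tolerated distortion do.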

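To put this to work, Ward held back a few of the compressed sensing measurements, reconstructed the image from the rest, and then checked the reconstruction against the held-out measurements. Applying Johnson-Lindenstrauss to those held-out measurements, she showed that the mismatch gives sharp bounds on the error of the compressed sensing algorithm, even though the true image is unknown.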
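The held-out-measurement idea can be sketched in a few lines of NumPy. The sketch assumes a hypothetical estimate `x_hat` produced by some recovery algorithm that never saw the held-out matrix `A_cv`; it stands in for a real reconstruction, so the numbers illustrate the principle rather than any result from Ward’s paper. Because `A_cv` is random and independent of the estimate, it acts like a Johnson-Lindenstrauss projection of the unknown error vector.

```python
import numpy as np

rng = np.random.default_rng(2)
n, r = 256, 60                     # signal length, number of held-out measurements

# Unknown sparse signal.
x = np.zeros(n)
x[rng.choice(n, size=5, replace=False)] = rng.standard_normal(5)

# Held-out measurements: recorded, but never shown to the recovery algorithm.
A_cv = rng.standard_normal((r, n)) / np.sqrt(r)
y_cv = A_cv @ x

# Hypothetical reconstruction (any estimate independent of A_cv would do).
x_hat = x + 0.1 * rng.standard_normal(n)

true_error = np.linalg.norm(x_hat - x)           # unknown in practice
cv_error = np.linalg.norm(A_cv @ x_hat - y_cv)   # computable from measurements alone
print(f"true error {true_error:.3f}, held-out estimate {cv_error:.3f}")
```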

“It was such a beautiful but also simple idea that if she breathed a word of it to anyone else who worked in the area, they would immediately see the full scope of it as well as its consequences and start working on it — and I wanted her getting the credit,” Daubechies recalls.

The field of compressed sensing was exploding just as Ward published her results in 2009. Now used in applications ranging from facial recognition to astronomy, compressed sensing was just the first of many wide-ranging methodologies that Ward would validate with her broad insights.

Developing a broad view

Her next big splash occurred during her postdoc at the Courant Institute at New York University, where she continued her work on a beautiful and unexpected connection between the Johnson-Lindenstrauss lemma (the bedrock of a field called random dimension reduction) and compressed sensing, two fields that had long been considered totally separate. She worked alongside Felix Krahmer, now a professor at the Technical University of Munich.

“In general, she has a very good intuition and feeling for things; she had this feeling that there should be some connection,” Krahmer says, referring to the Johnson-Lindenstrauss lemma and compressed sensing. “One direction had been [proved], but the other way is less straightforward. She had a feeling that there should be something doable, and indeed, the things worked out very closely to that.”

In 2011, Ward and Krahmer re-examined the conditions for the Johnson-Lindenstrauss lemma and found that a carefully placed dose of “randomness” was all that was needed: randomly flipping the signs of the columns of any measurement matrix that has the key property behind compressed sensing turns it into a Johnson-Lindenstrauss map. That is, they homed in on minimal conditions and derived an expression bounding the lower dimension required to reconstruct the higher-dimensional object (in the example above, two was the lower dimension used to reconstruct a three-dimensional object). Since then, hundreds of researchers in fields ranging from random dimension reduction and compressed sensing to fingerprint matching and MRIs have built on what Krahmer says is “still [his] most cited paper.”
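One way to see the role of randomness: take a structured compressed sensing matrix, here randomly chosen rows of the discrete cosine transform (DCT), and flip the sign of each column at random before applying it. The NumPy sketch below illustrates that recipe under assumed sizes; it does not reproduce the paper’s construction or constants, and `dct_matrix` and `embed` are hypothetical helper names. The last check shows why the signs matter: without them, a vector aligned with an unsampled DCT row is sent to zero.

```python
import numpy as np

def dct_matrix(n):
    """Orthonormal DCT-II matrix (rows are cosine basis vectors), built with NumPy."""
    k = np.arange(n)[:, None]
    j = np.arange(n)[None, :]
    C = np.sqrt(2.0 / n) * np.cos(np.pi * (j + 0.5) * k / n)
    C[0, :] /= np.sqrt(2.0)
    return C

rng = np.random.default_rng(3)
n, m, num_points = 512, 128, 10
C = dct_matrix(n)

# Structured measurement matrix: m randomly chosen DCT rows, rescaled.
rows = rng.choice(n, size=m, replace=False)
Phi = np.sqrt(n / m) * C[rows]

# The randomization step: one random sign per column (coordinate).
signs = rng.choice([-1.0, 1.0], size=n)

def embed(v):
    """Apply the random signs, then the subsampled DCT."""
    return Phi @ (signs * v)

X = rng.standard_normal((num_points, n))
ratios = [
    np.linalg.norm(embed(X[a] - X[b])) / np.linalg.norm(X[a] - X[b])
    for a in range(num_points)
    for b in range(a + 1, num_points)
]
print(f"distance ratios range from {min(ratios):.3f} to {max(ratios):.3f}")

# A worst case for the unsigned matrix: a DCT basis vector whose row was not sampled.
sampled = set(rows.tolist())
t = next(i for i in range(n) if i not in sampled)
no_sign_ratio = np.linalg.norm(Phi @ C[t])      # C[t] has unit length
with_sign_ratio = np.linalg.norm(embed(C[t]))   # typically close to 1
print(f"without signs: {no_sign_ratio:.3f}, with signs: {with_sign_ratio:.3f}")
```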

That paper was enough to garner Ward several job offers after only the second year of her three-year postdoc position. She chose to return to UT Austin for her tenure-track job, where she has remained since 2011 except for a few stints in industry.

“People that have a much broader view do a lot better, and I think Rachel is definitely one of those people,” Needell says. “She has a lot of expertise in a very wide range of math. She brings a set of tools that maybe other people working on problems in the area don’t necessarily have quick access to.”

Ward prioritizes working jointly with others, bringing her eclectic expertise to a variety of topics.

“That idea of a mathematician working by themselves is a stereotype that’s becoming less relevant as we move towards a more global, interconnected, interdisciplinary research community,” Ward explains. “There’s more and more important work being done in groups and collaboratively.”

Always building new connections

Ward’s focus on collaboration has served her well in her work, and she keeps finding herself at the forefront of “hot” areas. In 2014, she published her most-cited paper yet, jointly with Needell and Nati Srebro of the University of Chicago, connecting stochastic gradient descent, a workhorse optimization method, with the randomized Kaczmarz algorithm. Introduced by Stefan Kaczmarz in 1937, the Kaczmarz algorithm solves systems of linear equations by repeatedly adjusting a guess to satisfy one equation at a time; in 2009 it was updated to pick those equations at random. Since her publication, nearly 600 papers across the two areas have cited the research, which showed that the randomized Kaczmarz algorithm is a special case of stochastic gradient descent.
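The connection can be seen directly in code. This NumPy sketch writes the randomized Kaczmarz method as a stochastic gradient step: choose one equation at random, with probability proportional to its squared row norm, and step against the gradient of that equation’s squared error with step size 1/‖a_i‖², which lands exactly on that equation’s solution hyperplane. The system, sizes and iteration count are assumptions for illustration.

```python
import numpy as np

def randomized_kaczmarz(A, b, iters, rng):
    """Randomized Kaczmarz, written as a stochastic gradient step.

    Row i is sampled with probability ||a_i||^2 / ||A||_F^2. Projecting onto the
    hyperplane a_i . x = b_i equals one gradient step on the single-row loss
    f_i(x) = (a_i . x - b_i)^2 / 2 with step size 1 / ||a_i||^2.
    """
    row_norms_sq = np.sum(A**2, axis=1)
    probs = row_norms_sq / row_norms_sq.sum()
    x = np.zeros(A.shape[1])
    for i in rng.choice(A.shape[0], size=iters, p=probs):
        grad_i = (A[i] @ x - b[i]) * A[i]   # gradient of f_i at x
        x -= grad_i / row_norms_sq[i]       # step size 1 / ||a_i||^2
    return x

rng = np.random.default_rng(4)
A = rng.standard_normal((200, 20))
x_true = rng.standard_normal(20)
b = A @ x_true                              # a consistent system of 200 equations
x = randomized_kaczmarz(A, b, iters=3000, rng=rng)
print("error:", np.linalg.norm(x - x_true))
```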

Used in machine learning, neural networks, AI, and other areas, stochastic gradient descent is an algorithm for optimization. If you picture a function as a hilly landscape, the gradient at any given point is the slope of the ground there, pointing in the steepest uphill direction. To head for the bottom of a valley, you stand at that point, turn to face away from the gradient, and take a step. Next you find the gradient at your new location, take another step, and continue until the ground flattens out. The method is “stochastic” because each step estimates the gradient from a small random sample of the data rather than computing it exactly, which keeps every step cheap.

For years, applied researchers relied on stochastic gradient descent to optimize various functions, but it didn’t always work, because it depends on a step size that has to be chosen in advance. On a particularly bumpy landscape, steps that are too large can overshoot and bounce between the valley walls, while steps that are too small may crawl along so slowly that you never reach the bottom. Next came a new flavor called adaptive gradient descent, which changes the step size on the fly based on previously observed gradients and is now widely used as an industry standard. Unfortunately, adaptive gradient descent was only guaranteed to work for functions meeting restrictive conditions, such as having a single bowl-shaped valley (what mathematicians call a convex function).
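A minimal sketch of plain stochastic gradient descent, assuming NumPy: it fits a line y = w·t + c to noiseless synthetic data. Because the method minimizes a loss, each update moves opposite the gradient; “stochastic” means the gradient comes from one randomly chosen data point rather than the whole data set. The fixed step size of 0.1 is an assumption that happens to suit this data; it is exactly the hand-tuned knob that the step-size sensitivity described above refers to.

```python
import numpy as np

rng = np.random.default_rng(5)
t = rng.uniform(0.0, 1.0, size=500)
y = 2.0 * t + 1.0                   # synthetic data on the line y = 2t + 1

w, c = 0.0, 0.0                     # slope and intercept to learn
step = 0.1                          # fixed, hand-chosen step size
for i in rng.integers(0, len(t), size=5000):
    # Gradient of the single-sample loss (w * t_i + c - y_i)^2 / 2.
    err = w * t[i] + c - y[i]
    w -= step * err * t[i]
    c -= step * err
print(f"slope {w:.4f}, intercept {c:.4f}")
```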

Ward is most excited about her recent work on adaptive gradient descent. In 2020, she and researchers from Microsoft and Facebook Research (including one of her former students) did away with those conditions, proving that adaptive gradient descent succeeds even on wonky-looking hills. As she did with compressed sensing and Johnson-Lindenstrauss, Ward illuminated the math behind the methodology, showing that adaptive gradient descent works under broader conditions than previously believed.
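To illustrate what an adaptive step size buys, here is a sketch of one simple adaptive rule, a scalar variant often called AdaGrad-Norm: the step is η/b_k, where b_k² is a running sum of squared gradient norms. It assumes NumPy and a made-up two-variable quadratic that is steep in one direction and shallow in the other. Fixed-step gradient descent started with a step that is far too large blows up, while the adaptive rule with the same initial step shrinks its own step and converges. This only illustrates robustness to the step-size choice; it does not reproduce the analysis, which concerns far more general landscapes.

```python
import numpy as np

def grad(x):
    """Gradient of f(x) = 0.5 * (100 * x[0]**2 + x[1]**2)."""
    return np.array([100.0 * x[0], x[1]])

def fixed_step_gd(x0, step, iters):
    x = x0.copy()
    for _ in range(iters):
        x = x - step * grad(x)
    return x

def adagrad_norm(x0, eta, b0, iters):
    """Scalar adaptive step eta / b_k, where b_k^2 accumulates squared gradient norms."""
    x, b_sq = x0.copy(), b0**2
    for _ in range(iters):
        g = grad(x)
        b_sq += g @ g                       # update the accumulator first
        x = x - (eta / np.sqrt(b_sq)) * g
    return x

x0 = np.array([1.0, 1.0])
# Both methods start from the same, far-too-large step size: 1.0 / 0.01 = 100.
x_fixed = fixed_step_gd(x0, step=100.0, iters=10)
x_adapt = adagrad_norm(x0, eta=1.0, b0=0.01, iters=3000)
print(f"fixed step: {np.linalg.norm(x_fixed):.3e}, adaptive: {np.linalg.norm(x_adapt):.3e}")
```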

“What I like most is finding a new connection between the way I’ve been thinking about a problem and the way other people have been thinking,” Ward says. “By collaborating with lots of different people, your worldview keeps enlarging and you continue to be humbled and challenged.”