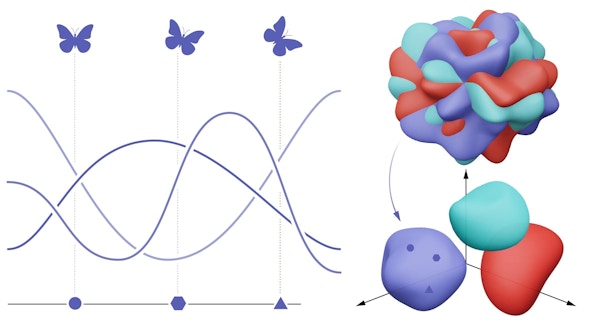

Our group develops mathematical theories of how neurons collectively give rise to behavior in biological and artificial neural networks. We currently approach this question from two broad directions at the intersection of computational neuroscience and deep learning: (1) analyzing the geometry of neural and feature representations and how that geometry supports embedding and transferring information, and (2) building neural network models and learning rules guided by neuroscience.

To do this, we combine computational tools from theoretical physics, applied mathematics, and machine learning. Alongside this theoretical work, we collaborate closely with experimentalists, both to draw inspiration from neural data and to test our ideas against it.

NeuroAI & Geometric Data Analysis Lab