The Pleasure (and Necessity) of Finding Things Out

Richard Feynman’s journey toward a Nobel Prize started with a deep desire to avoid doing anything important. The not-yet-famous physicist, fresh from the success of the Manhattan Project, had turned down a prestigious offer to join the Institute for Advanced Study in Princeton, N.J. Instead, he sat in a cafeteria at Cornell University, idly doodling equations to describe the wobbling of a plate tossed into the air by a student. That day, Feynman’s colleague (and former Manhattan Project collaborator) Robert Wilson had encouraged Feynman to explore any scientific idea that interested him, regardless of how useful it appeared to be. This challenge emboldened Feynman to model the spin of an electron in a similar way to the wobbling plate, which then inspired his further investigations into quantum electrodynamics. As Feynman recalled in a 1981 BBC documentary, “It was like a cork out of a bottle — everything just poured out. In short order I worked out the things for which I won a Nobel Prize.”

That film, “The Pleasure of Finding Things Out,” captures the essence and beauty of discovery-driven, or “basic,” research — but also its paradoxes. The scientific discovery process, fueled by creativity and intellectual freedom, produces so many of our society’s technical and humanitarian achievements, while also undergirding the United States’ economic prosperity and national security. Nevertheless, this same discovery process can all too easily be seen as a luxury when our nation is under stress or threat. Why “waste” taxpayer dollars on indulging scientists’ idle curiosity when there are jobs to be created, resources to secure, and wars to be won?

The trouble with that analysis is that work that seems idle one day can become crucial the next, and until scientists discover a working crystal ball, society will never be able to make the distinction in advance. Discovery-driven research is the best investment we can make in the face of the multiplying complexity and uncertainty that increasingly define our new century. It is, literally, an investment in ourselves at the most basic level: not merely a hedge against “unknown unknowns,” but a commitment to embracing them. In this country we value makers and risk takers: craftspeople, entrepreneurs and innovators who explore frontiers both intellectual and physical, whether it’s Lewis and Clark mapping a route across the western half of the North American continent, Charles and Ray Eames inventing a cheap and repeatable process to mold plywood into compound curves, or Steve Jobs daring to turn “dumb” cellphones into handheld computers. Scientists embody these values of risking and making as well; the very definition of “basic research” means investigating unexplained phenomena with unproven hypotheses and uncertain methods. It’s no accident that Vannevar Bush titled his 1945 report to President Roosevelt on the essential value of basic research “Science: The Endless Frontier.”

But then, as now, we must remind ourselves that the inexorable technological advances we expect in everything from our cellphones to our military forces are derived from an equally inexorable urge to wonder why photons, dark matter and everything in between behave the way they do. We must bear in mind that lifesaving vaccines and treatments require decades of wrestling with the most basic puzzles of biochemistry, and that the seeking of knowledge for its own sake is not a privilege that we earned by achieving economic dominance, but a value that allowed us to achieve that position in the first place.

Knowledge Is Power

The futurist and science-fiction writer Arthur C. Clarke famously remarked that “any sufficiently advanced technology is indistinguishable from magic.” Today we live in a world filled with technological magic, but we take most of it for granted, never realizing that the man behind the curtain is basic scientific research.

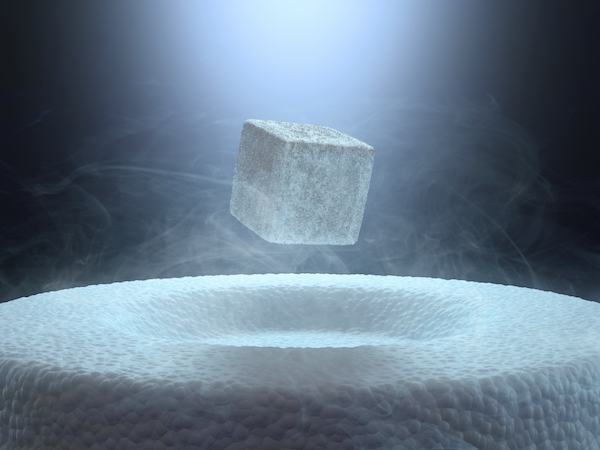

Modern electronics, including lasers and transistors, are the most frequently cited examples of the magic that emerged as a consequence of basic discoveries about quantum mechanics in the early 20th century. Yet other, less obvious but equally world-changing applications have also come from fundamental discoveries in physics. Consider, for example, superconductivity, in which electrical resistance disappears in materials cooled below certain critical temperatures, and nuclear magnetic resonance, in which atomic nuclei in a magnetic field absorb and re-emit electromagnetic radiation. Both phenomena were independently discovered in the first half of the 20th century, and both resulted in Nobel Prizes. But these basic discoveries also played critical roles in the development of clinical MRI (magnetic resonance imaging) machines that help doctors safely and accurately diagnose brain tumors and heart disease. This medical procedure, which creates detailed images of internal anatomy without resorting to dangerous radiation, once seemed magical but has now become utterly commonplace: more than 30 million MRIs are performed annually in the U.S., saving countless lives in the process.

Fundamental biological discoveries have also generated unforeseen insights or applications. The discovery of telomeres — the protective biomolecular “end caps” on DNA chromosomes — by Elizabeth Blackburn in 1978, which earned her a Nobel Prize, eventually led researchers to a deeper understanding of human aging and cancer two decades later, despite the fact that Blackburn found the telomeres while studying a species of protozoan that creates “pond scum.”

Even purely abstract findings in number theory — a field once praised by the mathematician Leonard Dickson for being “unsullied by any application” — have been put to work for us, most notably in the cryptographic mechanisms that protect our bank and credit card numbers when we shop on the Internet.

According to the biologist Jack Szostak, who shared the 2009 Nobel Prize in Physiology or Medicine with Blackburn (and Carol W. Greider), this is the power of basic research: “You don’t know where things are going to go in science.”

The Federal Funding Paradox

Still, despite its proven value and concrete results, the pursuit of scientific knowledge for its own sake — or “for the fun of it,” to use Feynman’s unvarnished phrase — can easily be made to appear trivial or expendable in the face of other obligations.

This fundamental paradox can be seen in the federal government’s own balance sheet. The America COMPETES Acts of 2007 and 2010 were intended to double the 2006-level budgets of the National Science Foundation (NSF), the National Institute of Standards and Technology (NIST), and the Department of Energy Office of Science (DOE Science) by 2017, with an aim to “invest in innovation through research and development, and to improve the competitiveness of the United States.” Yet according to an analysis by the Center for American Progress (CAP), as of 2014 these agencies remain $6 billion short of that goal and stand to face another $13.6 billion in shortfalls over the next seven years. The CAP report concludes that this shortfall would translate to a loss of “nearly 14,000 NSF research grants, 75 new NIST research institutes … and 24,000 person-years … of DOE Science research.”

Often the first argument made for the importance of fundamental research — and it’s a compelling one — is that it represents a means to the even more important ends of new applications, technologies and industries. NASA publishes an annual report called “Spinoff,” which enumerates the thousands of commercialized applications that have resulted from its space science activities. Still, no one would suggest that we sent human beings into space in order to derive useful inventions like memory foam and enriched baby formula. Yet this is the logic often deployed to rally public support for basic research, potentially drowning out the message that discovery is important in its own right.

Discovery and Application: Not So Different

Another way to reinvigorate the perceived value of basic research is to dissolve the distinction that has traditionally been made between discovery and application: that basic researchers may get prizes, but applied scientists get patents. But the contrast between finding things out and putting things to work is not necessarily productive, explains Paul Alivisatos, director of the Kavli Energy NanoSciences Institute (ENSI) at the University of California, Berkeley. Researchers at ENSI are studying the dynamics of nanoscale biological motors, such as the protein-strand “tails” that bacteria use to propel themselves through their environment. These tiny engines constantly fluctuate between forward and reverse motion, something no human-engineered motor would ever do. So what’s the point of knowing anything about them? “It turns out that nature is trying to solve the problem of wasting as little energy as possible by using some of these natural fluctuations,” Alivisatos explains. “There may be lessons for us in how we can make energy conversion systems that emerge on a very small length scale.” When asked if his own materials-science research should be considered “basic” or “applied,” he quips, “Do I have to choose?”

Robert Tjian, a biochemist and president of the Howard Hughes Medical Institute, also tries to break down the distinction between basic research and its translation into practical results. Tjian discovered the existence of “activator” proteins that regulate gene expression in microorganisms, and he continues to study these mechanisms in more complex life forms. He is also a co-founder of Tularik, a biotechnology company specializing in drug discovery via gene expression. “If somebody comes up to me and asks me am I a basic researcher or a translational researcher, I say that I’m both,” he says.

How Basic Science Thrives

While discovery and application are often co-generative in the sciences, conducting basic research requires unique support mechanisms that go beyond funding. One essential ingredient is a tolerance for risk and resilience in the face of setbacks. These are qualities that we can easily ascribe to artists and entrepreneurs, and to the organizations that support and employ them. But if we consider that basic science is, like art or invention, a fundamentally creative and exploratory process rather than a straight line from ignorance to knowledge, it becomes clear that discovery-driven researchers — and the organizations that support and employ them — need to nurture and cultivate the same kind of ethic.

Instead, the personal, cultural and financial disincentives for risk taking in science today are legion. Government grants are often short-term and results-oriented, and the “wrong” kind of public attention (as Patricia Brennan’s NSF-funded research on duck genitalia endured in 2013) can have a chilling effect. Paths to securing employment in academia are scarce and often politically fraught. Negative experimental results, while undeniably useful to the advancement of scientific knowledge, are devalued in the publication landscape, which also affects employment prospects. Unlike in Silicon Valley, “failure” is not embraced as a generative outcome of experimentation, nor is there a breathless press corps publicly dramatizing the day-to-day scientific process, which — unlike the boom-and-bust cycles of venture capitalists and startup CEOs — tends to be necessarily slow, rigorous and opaque to outsiders.

“There’s a lot of fear associated with risk because if you take risks, you’re going to make some mistakes,” agrees Paul Steinhardt, a theoretical physicist and the Albert Einstein Professor in Science at Princeton University. Still, Steinhardt started taking risks early in his career, when he decided not to specialize in the subject of his dissertation, a subset of two-dimensional quantum field theory. Today his discoveries span the disciplines of cosmology, crystallography and photonics. “I’m looking for a good puzzle, and if I can solve it, I don’t care what subject it is,” he says. He admits that he worried about his career prospects, but in the end, “I just said, ‘The heck with it.’ You’re going to suffer a certain amount of embarrassment on the learning curve. On the other hand, it’s so stimulating intellectually that it’s more than worth the negatives.”

Robert Tjian compares the drive to do basic research to athletic talent, which also demands personal risk taking and perseverance in order to flourish. Much as an athlete like Roger Federer or Michael Jordan had to practice endlessly in order to break new ground in performance or technique, the talent of a successful basic researcher lies not in his or her ability to consistently generate perfectly formed, media-ready “breakthroughs,” but rather, Tjian says, in an innate ability “to take the 85 percent of your experiments that screw up and say, ‘Hey, wait a minute, it’s not just a screwup, it’s telling me something.’”

Yet possessing this indefatigable drive for discovery, while necessary, may not be sufficient. Basic researchers often need strong mentors to ignite their talent. Steinhardt’s role model was Richard Feynman himself. When Steinhardt helped organize Feynman’s famous “Physics X” seminars at the California Institute of Technology in 1973 and 1974, which gave students from any discipline the opportunity to ask Feynman any questions they wanted, Steinhardt witnessed firsthand the value of wide-open curiosity in scientific inquiry. “It strongly influenced me,” Steinhardt recalls. “Feynman wasn’t sending us the message that we should all be doing high-energy physics like he was. He was saying that all phenomena are interesting, and you should be curious about them.”

Finally, time — potentially on the scale of decades rather than years — is also a prerequisite for supporting the kind of frontier-pushing research that drives science and society forward. As an example, Jack Szostak cites his own research on telomeres, which took decades to draw the attention of the Nobel committee. “It wasn’t clear that there were going to be any huge applications or even huge implications of the work,” he says. Szostak’s latest research endeavor, the Simons Collaboration on the Origins of Life, will devote at least eight years — twice the typical length of a federal grant — to supporting “creative, innovative research on topics including the astrophysical and planetary context of the origins of life, the development of prebiotic chemistry, the assembly of the first cells, the advent of Darwinian evolution, and the earliest signs of life on the young Earth.”

While unraveling what Szostak calls “one of the most fundamental mysteries in all of science” will almost certainly take longer than a decade, discoveries made along the way will justify the investment. “You have a ‘big question’ like the origin of life, but it’s not just one question,” Szostak says. “It breaks down to a whole bunch of sub-questions, and we want to see those being answered.” In other words, the image of basic researchers idly toying with unanswerable puzzles is a misleading one. Even the “purest” of theoreticians — like the Fields Medal-winning number theorist Manjul Bhargava, who proudly compares his mathematical inquiries to artistic projects — hunt the same quarry as all scientists and mathematicians do: robust results and airtight proofs, generated at an encouraging pace.

What Strong Support for Discovery-Driven Science Looks Like

While private funders might be better equipped to underwrite high-risk fundamental research, their involvement simply cannot close the $6 billion gap left by the federal government. What’s needed to truly bolster basic science is a vigorous and diverse contribution from government, philanthropic and corporate sources so that basic research on any scale, from one-year individual projects to multidisciplinary longitudinal studies spanning decades, has a viable pathway to support.

Some prominent basic researchers, like Paul Steinhardt and the physicist and former NSF director Neal F. Lane, argue that government support for discovery-driven science should be managed like an investment portfolio, with a diversity of risk and timelines for potential returns. And those returns could be more substantial than those boasted by any hedge fund: According to a report by the research firm Battelle Technology Partnership Practice, the $3.8 billion in federal support for the Human Genome Project generated $796 billion in economic growth and job creation between 1988 and 2010, or $141 in return for every dollar invested.

Others, like Robert Tjian, suggest that sports-style “talent scouting” for the next generation of basic-science investigators must become a national priority. “I have high school students that work in my lab in Berkeley,” he says. “The sooner you get them, the better.” The much-heralded STEM (science, technology, engineering, mathematics) curriculum initiative has its head in the right place, but the more nascent STEAM movement, which adds art (and design) to the acronym, may have an even greater potential to inspire young people while sidestepping the Cold War-style anxiety that often bubbles beneath the surface of STEM support. John Maeda, an artist-scientist who attended the Massachusetts Institute of Technology and also served as president of the prestigious Rhode Island School of Design, advocates heavily for a STEAM orientation in primary education in order to cultivate a new generation of creative leaders who can connect the power of scientific inquiry and technical implementation with the universal values of the humanities.

What’s Old Is New Again

This forward-looking approach to fostering scientific advances actually has deep roots. From the “natural philosophers” of the 18th and 19th centuries, to Bell Labs and Xerox PARC in the mid-20th century, to Janelia Farm and the MIT Media Lab in the 21st, individuals and organizations that equally value aggressive innovation and open-ended creativity have produced the steadiest stream of scientific breakthroughs. Indeed, the most compelling reason to vigorously support basic science is arguably the same one that motivated Richard Feynman to jot down equations in that Cornell cafeteria: because “the pleasure of finding things out” is built into human nature. It is something we are all born with. Basic science harnesses this intrinsic human urge and focuses it into one of the most potent means of understanding and changing the world that we have ever wielded. The name that scientists have given our species — Homo sapiens, “wise man” — honors this fact. Many animals are curious, and all children wonder “why.” But to actually find out — and to draw not just meaning and wisdom but joy from that never-ending project — this is much of what makes us human. As Robert Tjian puts it: “The real reward is just actually discovering something, and knowing that for a short period of time you’re the only person in the universe that knows it.”

But even those triumphant moments do not fully capture the value that basic research embodies. After all, to truly discover something new requires that we unblinkingly regard our own ignorance, not as a liability or an enemy to conquer, but as an opportunity and perhaps a responsibility. Feynman acknowledged as much when he addressed the National Academy of Sciences in 1955 in a lecture called, simply, “The Value of Science.” An intrinsic part of that value, he asserted, was “ignorance and doubt and uncertainty. … Our freedom to doubt was born out of a struggle against authority in the early days of science. It was a very deep and strong struggle: permit us to question — to doubt — to not be sure. I think that it is important that we do not forget this struggle and thus perhaps lose what we have gained.”

Otherwise put, “the pleasure of finding things out” may be an exuberant process, but it must be bookended on either side by an immutable respect for the unknown, and for our own perpetually small position within the vastness of the universe. “It is our responsibility as scientists,” Feynman said, “to teach how doubt is not to be feared but welcomed and discussed; and to demand this freedom as our duty to all coming generations.”

Discovery-driven science continually shows us, paradoxically, how much we still do not understand. That is its value, and why it matters: because Homo sapiens is an aspirational label, not a foregone conclusion.