Finding Prime Locations: The Continuing Challenge to Prove the Riemann Hypothesis

Prime numbers are the ‘atoms’ of the integers, and as such they are a central object of study in the field of number theory. One key puzzle facing number theorists is the distribution of primes: There is no known way, other than brute-force computation, to predict exactly where the nth prime number will be or what the distance between two consecutive large primes will be.

Considered by many to be the most important unsolved problem in mathematics, the Riemann hypothesis makes precise predictions about the distribution of prime numbers. As the name suggests, it is for now only a conjecture. If proved, it would immediately solve many other open problems in number theory and refine our understanding of the behavior of prime numbers.

The Riemann hypothesis builds on the prime number theorem, conjectured by Carl Friedrich Gauss in the 1790s and proved in the 1890s by Jacques Hadamard and, independently, by Charles-Jean de La Vallée Poussin. Roughly speaking, the prime number theorem states that the number of primes less than n is proportional to n divided by the number of digits in n. A greater proportion of two-digit numbers are prime than three-digit numbers, which are themselves more likely to be prime than four-digit numbers, and so on; the prime number theorem quantifies that decreasing relationship.
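As a rough illustration of the prime number theorem, the short Python sketch below counts primes with a sieve of Eratosthenes and compares each count with n/ln n, the usual form of the theorem's estimate. The natural logarithm ln n is proportional to the number of digits of n, which is why the two phrasings agree. The helper name `prime_count_table` is our own; this is an illustrative sketch, not a reference implementation.

```python
import math

def prime_count_table(limit: int) -> list[int]:
    """Return counts where counts[k] is the number of primes <= k (limit >= 1)."""
    is_prime = bytearray([1]) * (limit + 1)
    is_prime[0:2] = b"\x00\x00"  # 0 and 1 are not prime
    for p in range(2, math.isqrt(limit) + 1):
        if is_prime[p]:
            # Cross out every multiple of p starting at p*p
            is_prime[p * p :: p] = bytearray(len(range(p * p, limit + 1, p)))
    counts, running = [], 0
    for flag in is_prime:
        running += flag
        counts.append(running)
    return counts

pi = prime_count_table(10**6)
for n in (10**2, 10**3, 10**4, 10**5, 10**6):
    estimate = n / math.log(n)
    print(n, pi[n], round(estimate), round(pi[n] / estimate, 3))
```

For these values of n the ratio of the true count to n/ln n stays between about 1.08 and 1.17, approaching 1 only slowly.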

The Riemann hypothesis, formulated by Bernhard Riemann in an 1859 paper, is in some sense a strengthening of the prime number theorem. Whereas the prime number theorem gives an estimate of the number of primes below n for any n, the Riemann hypothesis bounds the error in that estimate: At worst, it grows like √n log n.
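The error bound is usually stated for a refinement of the simple estimate: the logarithmic integral li(n). A 1901 theorem of Helge von Koch shows that the Riemann hypothesis is equivalent to π(n), the number of primes up to n, differing from li(n) by at most a constant multiple of √n log n. The sketch below assumes the offset version Li(n), the integral of 1/ln t from 2 to n (it differs from li(n) by about 1.045), and compares the actual error with √n ln n for a few values of n. The helper names are our own, and this is a numerical illustration only: no finite computation can confirm the bound.

```python
import math

def prime_count(n: int) -> int:
    """Count primes <= n with a sieve of Eratosthenes."""
    sieve = bytearray([1]) * (n + 1)
    sieve[0:2] = b"\x00\x00"
    for p in range(2, math.isqrt(n) + 1):
        if sieve[p]:
            sieve[p * p :: p] = bytearray(len(range(p * p, n + 1, p)))
    return sum(sieve)

def offset_li(x: float, steps: int = 20_000) -> float:
    """Li(x), the integral of dt/ln t from 2 to x, by composite Simpson's rule.
    Substituting t = e^u turns the integrand into the smooth function e^u / u."""
    a, b = math.log(2), math.log(x)
    h = (b - a) / steps  # steps must be even for Simpson's rule

    def f(u: float) -> float:
        return math.exp(u) / u

    total = f(a) + f(b)
    for k in range(1, steps):
        total += (4 if k % 2 else 2) * f(a + k * h)
    return total * h / 3

for n in (10**4, 10**5, 10**6):
    error = prime_count(n) - offset_li(n)
    bound = math.sqrt(n) * math.log(n)
    print(n, round(error, 1), round(bound))
```

For n = 10⁶ the sieve finds 78,498 primes while Li(n) is roughly 78,626, an error of under 130, far below √n ln n ≈ 13,800.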

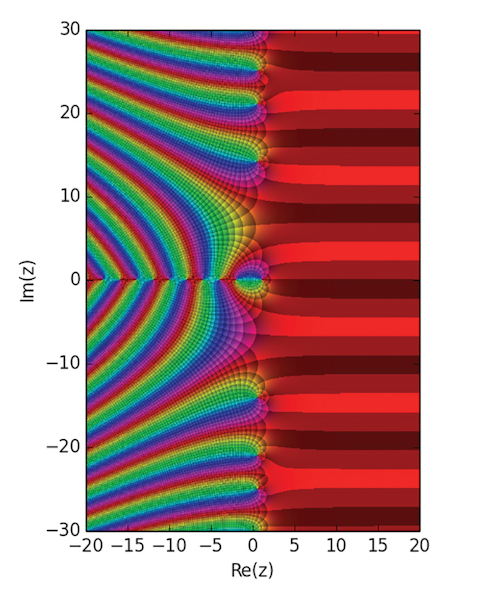

The Riemann zeta function is defined on the complex plane, the set of all numbers of the form s = a + bi, where a and b are real numbers and i = √-1. (Though mathematicians usually use the letter z to represent a complex variable, they defer to Riemann and use the variable s in the zeta function.) When a > 1, the zeta function is defined this way:

$$
\zeta(s) = \frac{1}{1^s} + \frac{1}{2^s} + \frac{1}{3^s} + \cdots
$$

or in shorthand, like this:

$$
\zeta(s) = \sum_{n=1}^\infty \frac{1}{n^s}
$$
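Python's built-in complex numbers are enough to evaluate partial sums of this series when a > 1. The sketch below uses s = 3 + 4i as an arbitrary sample point (the helper name `zeta_partial_sum` is ours). Because each term satisfies |1/n^s| = 1/n^a, the terms shrink fast enough for the partial sums to settle on a single complex value.

```python
def zeta_partial_sum(s: complex, terms: int) -> complex:
    """Sum 1/n^s for n = 1..terms; this approximates zeta(s) only when Re(s) > 1."""
    return sum(n ** -s for n in range(1, terms + 1))

s = 3 + 4j
for terms in (10, 100, 1000, 10_000):
    print(terms, zeta_partial_sum(s, terms))
```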

For example, if s = 2, then the zeta function is written as:

$$
\zeta(2) = \sum_{n=1}^\infty\frac{1}{n^2} = \frac{1}{1^2} + \frac{1}{2^2} + \frac{1}{3^2} + \cdots
$$

This series has a finite sum of π²/6 (which Leonhard Euler showed in the 1700s).
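A quick numerical check of Euler's value, as a sketch: the partial sums below approach π²/6. Because the leftover tail 1/(N+1)² + 1/(N+2)² + … lies between 1/(N+1) and 1/N (compare it with the telescoping sums of 1/(n(n+1)) and 1/(n(n−1))), stopping after N terms leaves an error of about 1/N. The helper name `basel_partial_sum` is ours.

```python
import math

def basel_partial_sum(terms: int) -> float:
    """1/1^2 + 1/2^2 + ... + 1/terms^2, summed with compensated rounding."""
    return math.fsum(1 / n**2 for n in range(1, terms + 1))

target = math.pi ** 2 / 6
for terms in (10, 100, 1000, 10_000):
    gap = target - basel_partial_sum(terms)
    # The tail after `terms` lies strictly between 1/(terms + 1) and 1/terms
    print(terms, gap, 1 / (terms + 1) < gap < 1 / terms)
```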

When the value of a is less than or equal to 1, the infinite series above does not yield a well-defined, finite value. For example, if a = 1 and b = 0, then s = 1, and ζ(1) will be the series 1 + 1/2 + 1/3 + 1/4 + …, which grows without bound. The same is true for s = 0 (the sum will be 1 + 1 + 1 + …) and s = −1 (where it will be 1 + 2 + 3 + 4 + …). So if that were the only way to define ζ(s), the function could only be defined for points on the complex plane of the form a + bi, where a > 1.
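The divergence at s = 1 is slow. As a sketch, the partial sums of 1 + 1/2 + 1/3 + … grow like the natural logarithm of the number of terms, so they eventually exceed any bound, and the difference between the two approaches the Euler-Mascheroni constant, about 0.5772. The helper name `harmonic` is ours.

```python
import math

def harmonic(terms: int) -> float:
    """Partial sum 1 + 1/2 + ... + 1/terms of the series for zeta(1)."""
    return math.fsum(1 / n for n in range(1, terms + 1))

for terms in (10, 1000, 100_000, 1_000_000):
    h = harmonic(terms)
    print(terms, round(h, 4), round(h - math.log(terms), 4))
```

Even with a million terms the sum is only about 14.39.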

But mathematicians have a trick up their sleeves: analytic continuation. In the case of the zeta function, it allows mathematicians to extend the function to complex numbers a + bi where a is not greater than 1. If mathematicians require the extended function to be analytic (differentiable in the complex sense) and to agree with the original function wherever the series converges, there is only one way to do it, which is why mathematicians speak of the analytic continuation of a function rather than an analytic continuation.

Hence, the Riemann zeta function is defined as 1/1^s + 1/2^s + 1/3^s + … when that series has a well-defined, finite value (which happens when s is a complex number of the form a + bi with a greater than 1); otherwise, ζ(s) is the value at s of the analytic continuation of the function defined by that series. (The zeta function cannot be extended to the entire complex plane: it remains undefined at exactly one point, s = 1.)
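The original series cannot be evaluated when a ≤ 1, so computing the continuation needs a different formula. One standard route, sketched below, uses the alternating series η(s) = 1 − 1/2^s + 1/3^s − …, which converges for a > 0, together with the identity ζ(s) = η(s)/(1 − 2^(1−s)); an acceleration due to Peter Borwein makes the alternating sum converge quickly. This is one method among several, not the only way to compute ζ. The parameter n = 60 is our assumption: it is adequate for |b| below about 50, but the error grows with |b|, so larger heights need a larger n.

```python
import math
from fractions import Fraction

def zeta(s: complex, n: int = 60) -> complex:
    """Approximate zeta(s) for 0 < Re(s) < 1 or Re(s) > 1 using Borwein's
    acceleration of the alternating series eta(s) = 1 - 1/2^s + 1/3^s - ...
    n = 60 is an assumed accuracy parameter, adequate for |Im(s)| below about 50."""
    # d_k = n * sum_{i=0}^{k} (n+i-1)! 4^i / ((n-i)! (2i)!), kept exact with Fractions
    d, running = [], Fraction(0)
    for i in range(n + 1):
        running += Fraction(n * math.factorial(n + i - 1) * 4**i,
                            math.factorial(n - i) * math.factorial(2 * i))
        d.append(running)
    # Weighted alternating sum approximates eta(s)
    eta = sum((-1) ** k * float((d[n] - d[k]) / d[n]) / (k + 1) ** s
              for k in range(n))
    # zeta(s) = eta(s) / (1 - 2^(1 - s)); the denominator is nonzero off the line Re(s) = 1
    return eta / (1 - 2 ** (1 - s))

print(zeta(2))                               # close to pi^2 / 6
print(zeta(0.5))                             # a point where the original series diverges
print(abs(zeta(0.5 + 14.134725141734693j)))  # near the lowest nontrivial zero
```

At s = 1/2 + 14.134725141734693i, close to the lowest nontrivial zero, the result is within rounding error of 0, even though the defining series does not converge there.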

Defining the Riemann zeta function via analytic continuation is important because it enables mathematicians to use techniques from a field called complex analysis, which studies differentiable functions of a complex variable, to draw conclusions about the infinite sums that motivated the definition of the function in the first place.

The Riemann hypothesis concerns the values of s such that ζ(s) = 0. In particular, it says that if ζ(s) = 0, then either s is a negative even integer or s = 1/2 + bi for some real number b. The negative even integers are called the ‘trivial’ zeros of the zeta function because relatively simple mathematical arguments show that ζ(s) = 0 at each of them.
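One such argument goes through Riemann's functional equation, ζ(s) = 2^s π^(s−1) sin(πs/2) Γ(1−s) ζ(1−s), where Γ is the gamma function. For s = −2, −4, −6, …, the sine factor is zero while every other factor is finite, so ζ(s) = 0. The sketch below evaluates the right-hand side for real s < 0 only (Python's `math.gamma` takes real arguments), computing ζ(1−s) from the series plus a short Euler-Maclaurin tail correction. The helper names `zeta_real` and `zeta_negative` are ours.

```python
import math

def zeta_real(sigma: float, terms: int = 1000) -> float:
    """zeta(sigma) for real sigma > 1: partial sum plus Euler-Maclaurin tail terms."""
    partial = math.fsum(n ** -sigma for n in range(1, terms + 1))
    tail = (terms ** (1 - sigma) / (sigma - 1)
            - terms ** -sigma / 2
            + sigma * terms ** (-sigma - 1) / 12)
    return partial + tail

def zeta_negative(s: float) -> float:
    """zeta(s) for real s < 0 from the functional equation
    zeta(s) = 2^s * pi^(s-1) * sin(pi*s/2) * Gamma(1-s) * zeta(1-s)."""
    return (2 ** s * math.pi ** (s - 1) * math.sin(math.pi * s / 2)
            * math.gamma(1 - s) * zeta_real(1 - s))

for s in (-1, -2, -3, -4):
    print(s, zeta_negative(s))
```

At s = −1 this returns approximately −1/12, the value of the analytic continuation there (not a sum of the divergent series 1 + 2 + 3 + …), and at s = −2 and s = −4 it returns 0 up to rounding error.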

The other zeros are the mysteries. Mathematicians know they all lie in the so-called ‘critical strip’ of numbers a + bi where a is between 0 and 1; the conjecture is that a is always 1/2. Computers have found 10¹³ of these nontrivial zeros; every last one has the form 1/2 + bi. But no number of examples amounts to a proof that every nontrivial zero must have that form. The relationship Riemann discovered between the zeta function and the prime number theorem involves the precise locations of the zeros of the zeta function. If their real parts are all equal to 1/2, that relationship can be translated into the statement that the errors in the estimates given by the prime number theorem are at most proportional to √n log n.
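To give a sense of how zeros on the line are located, the sketch below uses Hardy's Z-function, Z(b) = e^(iθ(b)) ζ(1/2 + bi), which is real when b is real and vanishes exactly where ζ does on the line a = 1/2, so each sign change of Z marks a zero on that line. It evaluates ζ with an accelerated alternating series due to Peter Borwein (n = 60 is our assumption, reliable only for b below about 50) and uses an asymptotic formula for the Riemann-Siegel θ function; the variable t in the code plays the role of b, and all helper names are ours. Sign changes only certify zeros that lie on the line; large-scale verifications also count all zeros in the critical strip up to a given height (for example with Turing's method) to rule out any off the line, which this sketch does not attempt.

```python
import cmath
import math
from fractions import Fraction

N_TERMS = 60  # assumed accuracy parameter for Borwein's method

def borwein_weights(n: int) -> list[float]:
    """Weights (d_n - d_k) / d_n, k = 0..n-1, for Borwein's accelerated eta series."""
    d, running = [], Fraction(0)
    for i in range(n + 1):
        running += Fraction(n * math.factorial(n + i - 1) * 4**i,
                            math.factorial(n - i) * math.factorial(2 * i))
        d.append(running)
    return [float((d[n] - d[k]) / d[n]) for k in range(n)]

WEIGHTS = borwein_weights(N_TERMS)

def zeta(s: complex) -> complex:
    """zeta(s) for 0 < Re(s) < 1 via eta(s) / (1 - 2^(1 - s))."""
    eta = sum((-1) ** k * w / (k + 1) ** s for k, w in enumerate(WEIGHTS))
    return eta / (1 - 2 ** (1 - s))

def theta(t: float) -> float:
    """Riemann-Siegel theta from its asymptotic expansion (accurate for t >= 10)."""
    return (t / 2 * math.log(t / (2 * math.pi)) - t / 2 - math.pi / 8
            + 1 / (48 * t) + 7 / (5760 * t**3))

def hardy_z(t: float) -> float:
    """Z(t) = exp(i*theta(t)) * zeta(1/2 + i*t), real up to rounding."""
    return (cmath.exp(1j * theta(t)) * zeta(0.5 + 1j * t)).real

def critical_line_zeros(t_min: float, t_max: float, step: float = 0.05) -> list[float]:
    """Bracket sign changes of Z on a grid, then refine each one by bisection."""
    count = int(round((t_max - t_min) / step))
    grid = [t_min + k * step for k in range(count + 1)]
    values = [hardy_z(t) for t in grid]
    zeros = []
    for lo, hi, z_lo, z_hi in zip(grid, grid[1:], values, values[1:]):
        if z_lo * z_hi < 0:
            for _ in range(40):
                mid = (lo + hi) / 2
                z_mid = hardy_z(mid)
                if z_lo * z_mid <= 0:
                    hi = mid  # sign change lies in [lo, mid]
                else:
                    lo, z_lo = mid, z_mid  # sign change lies in [mid, hi]
            zeros.append((lo + hi) / 2)
    return zeros

zeros = critical_line_zeros(10.0, 50.0)
print(len(zeros), [round(t, 6) for t in zeros])
```

Between b = 10 and b = 50 this finds 10 zeros, the first near b ≈ 14.134725.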

A solution to the Riemann hypothesis — and to newer, related hypotheses that fall under the umbrella of the ‘generalized Riemann hypothesis’ — would prove hundreds of other theorems. In one fell swoop, it would establish that certain algorithms will run in a relatively short amount of time (known as polynomial time) and would explain the distribution of small gaps between prime numbers. In short, a solution would solidify humanity’s understanding of some of the most fundamental objects and relationships in mathematics.