Hippocampal Replay: Reflection on the Past or Planning for the Future?

Like most children, my kids love to play hide-and-seek. When they were very young, however, the game wasn’t much fun for me, mostly because they repeatedly hid in the same spots — under the couch or behind a door. As I considered their location — Are they behind the door again? — did the neural activity whizzing away in my brain correspond to my plan to check behind the door in the future or to my memory of checking behind the door last time?

Neuroscientists studying the hippocampus, a brain structure crucial for spatial navigation and memory, have been pondering that very question for the last 15 years. New research published in Neuron in October by Anna Gillespie, a postdoctoral fellow with the Simons Collaboration on the Global Brain (SCGB), and Loren Frank, a neuroscientist at the University of California, San Francisco and an SCGB investigator, provides solid support for the memory side of the debate.

Hippocampal neurons become active when an animal navigates through a particular location in space. But hippocampal activity also occurs during periods of immobility, when cells representing locations other than the animal’s current position become active. Researchers believe that these activity patterns, termed ‘replay events,’ represent an animal ‘thinking’ about locations in space other than where it is. But there is substantial disagreement and speculation about the purpose of these events. Do replay events represent memories of the past or plans for the future?

To address this question, Gillespie developed a maze that allowed her to unequivocally determine whether replay events encode past or immediate future actions. Gillespie found little evidence suggesting that replay events reliably correspond to the animal’s immediate upcoming movement plan. Rather, these events more often correspond to locations the animal visited — and received a reward for visiting — in the recent past, supporting the view that hippocampal replay is critical for consolidating important memories of recent events rather than planning for immediate future movements.

“We’re not seeing any evidence that is contributing to future behavior on the immediate trial-by-trial timescale in these tasks,” says Frank. However, this does not mean that replay has no role in planning whatsoever. Rather, it suggests that replay events help update a ‘map’ of important behaviors and their outcomes, which could be used later by another part of the brain to plan and execute a decision, Frank says.

Replaying the hits

The hippocampus has long fascinated neuroscientists because of the rich mixture of behavioral functions it appears to house. Its best-known function is to consolidate memories. Humans and research animals whose hippocampus has been removed or damaged are impaired in their ability to form memories of their recent experiences. Another central role is to guide navigation. Nobel Prize-winning work in the 1970s identified cells in the hippocampus, since termed place cells, that become active when an animal moves through a particular location in space. Strung together in sequence, the activity of these cells can provide a high-fidelity map of an animal’s trajectory through an environment.

Although preceded by suggestive findings, research published in Science in 1994 by neuroscientists Matt Wilson and Bruce McNaughton introduced the tantalizing prospect of uniting these two functions within the hippocampus. They discovered that pairs of cells that responded to adjacent locations in space while an animal navigated were also activated together while the animal slept after navigation. Two follow-up studies, one by McNaughton and one by Wilson, found respectively that the relative timing of the responses during sleep mimicked the timing seen during navigation and that strings of co-reactivations formed sequences during sleep.

This sequential reactivation of place cell responses during sleep was dubbed ‘replay’ and provided a scaffolding for theories of memory consolidation in the hippocampus. Sequences of wakeful place cell activity encoded the animal’s actions, and these events were replayed in the same order during sleep, hypothetically consolidating memories by strengthening the most active synapses and weakening inactive ones. Because synapses are strengthened or weakened depending on the amount of recurring neural activity, replaying important activity sequences is a clever mechanism for reinforcing them.

In 2006, the narrative took an unexpected turn. Research by Wilson, now at the Massachusetts Institute of Technology, and his postdoctoral trainee Dave Foster found that replay events were not restricted to sleep but could occur just moments after navigation, while the animal was still awake. Enriching the tale further, they found that in most of these ‘awake replay’ events, place cells fired in the opposite order from the one in which they had fired during navigation, earning the events the name ‘reverse replay.’ Coupled with a dopamine response from other brain regions, “this could be a great way to assign a reward weight to distant locations,” says Frank, consistent with a “memory storage or updating role” for awake replay events. Played in reverse, the sequence reactivates the location where the reward was just received first, pairing it with a fresh reward signal, while more distant locations are reactivated later and paired with the decaying residue of that signal. This could provide a mechanism for gradually building associations between a reward location and the path the animal took to reach it. These findings were especially exciting because they were strikingly similar to theories of memory in the hippocampus introduced in 1989 by the New York University neuroscientist György Buzsáki.
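The reward-weighting idea behind reverse replay can be illustrated with a toy calculation. The sketch below is not a model from any of the studies discussed here; the function name `reverse_replay_weights`, the exponential decay, the `decay` factor of 0.5 per replay step, and the additive credit are all illustrative assumptions. It simply shows how replaying a path backward, while a reward signal fades, leaves the most credit at the reward site and progressively less at locations farther back along the route.

```python
def reverse_replay_weights(path, reward=1.0, decay=0.5):
    """Toy illustration: credit each location on a path with a decaying reward signal.

    Hypothetical assumptions (not taken from any study):
      - `path` lists locations in the order the animal visited them,
        ending at the rewarded location.
      - Replay reactivates the path in reverse, one location per step.
      - The reward signal starts at `reward` and is multiplied by `decay`
        after each replay step (exponential decay).
      - Credit from repeated visits simply adds up.
    """
    weights = {}
    signal = reward
    for location in reversed(path):
        # The reward site is replayed first, while the signal is strongest;
        # locations farther back along the path receive a weaker residue.
        weights[location] = weights.get(location, 0.0) + signal
        signal *= decay
    return weights


if __name__ == "__main__":
    print(reverse_replay_weights(["start", "junction", "arm", "reward site"]))
```

Running the script prints `{'reward site': 1.0, 'arm': 0.5, 'junction': 0.25, 'start': 0.125}`: a gradient of reward weight that falls off with distance from the goal. Under the same assumptions, replaying the path in forward order would reverse the gradient, giving the starting point the most credit, which is one intuition for why the backward ordering seemed suited to a memory-updating role.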

A year after Wilson’s findings, Buzsáki, then at Rutgers University, confirmed the existence of awake reverse replay events and also identified traditional ‘forward’ replay events during wakefulness. This second discovery sparked speculation about the role replay might play beyond memory consolidation. Buzsáki found that reverse replay events occurred more frequently at the end of navigation, consistent with a role in memory consolidation, whereas forward replay events occurred just before navigation, and he speculated that they might be useful for planning future actions. Following this result, “the field expanded, quite a lot,” recalls Buzsáki, with researchers digging deeper into different replay events and how they potentially encode the past or the future.

Past or future?

To Gillespie and Frank, a major challenge was reconciling conflicting results from prior studies, some favoring memory and others planning, that had used a wide range of behavioral tasks. Many studies that followed Buzsáki’s results were conducted in environments much like the hide-and-seek world of my children: too simple for individual locations to be unambiguously related to the past or the future. “The fact that a lot of old tasks were very repetitive was a real sticking point,” says Gillespie. “If you’re asking questions about the past and future in a repetitive task, you just don’t have the power to make those conclusions.”

On the other hand, some studies employed tasks that were very complex, which similarly complicated attempts to unequivocally relate replay events to specific past or future trajectories. Some of the strongest empirical evidence to date for replay’s role in charting planned navigational paths was published in 2013 by Brad Pfeiffer, now an investigator at the University of Texas Southwestern Medical Center. Working as a postdoc in Foster’s lab, Pfeiffer examined the content of awake replay events while rats navigated an open arena to find food rewards. The task required the rat to alternate between navigating to a random reward location and heading to a fixed ‘home’ reward location. Just before the rat navigated to the home site, Pfeiffer found replay events that more closely resembled the path toward the home site than the path the animal had taken to its current location. These findings were widely interpreted as showing that replay events provide a neural substrate for planning immediate future actions.

Additional support for replay’s role in planning came from a 2013 paper from Frank’s own lab, which found that replay events seemed to predict whether the animal’s upcoming choice would be correct or not. “Putting all of that together, it really seemed like what’s going on is that these are an instantiation of plans that could get evaluated,” says Frank.

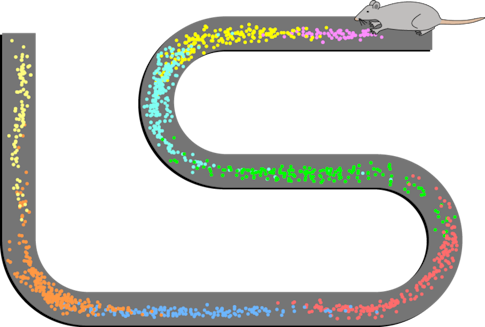

To directly address whether replay favors memory or planning, Gillespie and Frank developed a ‘Goldilocks’ task: a complex yet highly structured task that required the rat to discover predictable reward sites but also adapt when those sites changed. Frank reasoned that an environment offering many options, complex enough to be rich but not so complex as to lose its structure, would let the researchers see replay events unambiguously aimed at particular locations in the maze and with a clear relationship to reward. “My bias going into this was that it was going to show that the planning hypothesis was correct, because it was so appealing to think that these were times when the animal was sampling future possibilities and evaluating them,” says Frank.

Rats had to learn which of eight arms in the maze offered a reward and keep visiting that arm to collect it. After a series of rewarded visits, the goal arm changed and the rat needed to begin searching again. This design let the researchers compare the content of replay events with the location the rat had just visited, the location it was about to visit, and the several locations where reward had been reliably delivered in the recent past.

To their surprise, Gillespie’s results offered little support for a role for replay in planning immediate future trajectories: Replay events before navigation did not reliably predict the animal’s forthcoming movement. Instead, replay events were more likely to represent trajectories that had been reliably rewarded in the recent and slightly more distant past. As the rat navigated more reliably toward a newly rewarded arm, replay of this new reward site became more common.

But before the rat reliably learned the new reward location, and even well after the new location was learned, the most common replay event reflected the route to the previous reliably rewarded arm, strongly supporting replay’s role in memory, not planning. “Replay toward the previous reward location continued for 10 to 20 trials, even though the animal was absolutely doing something else,” says Frank.

“It was really striking to us that this is what replay was representing because [the previously rewarded goal arm] is not a location that would be useful in terms of optimizing the rat’s future behavior,” Gillespie says. “You can imagine, in more of an ethological setting, storing representations of places that have historically been useful might be a good thing to do.”

What comes next?

Gillespie’s results are surprising and strongly call into question replay’s role in planning immediate future navigation. Instead, the results support a view that replay constantly ‘refreshes’ the memories of important recently visited locations so that new experiences don’t immediately overwrite them. However, this does not mean that replay has no role in upcoming behaviors.

“We think that the reason replay can be useful for memory-guided behavior is because it’s providing you with a well-stored representation of what happened in the past that can later be used,” says Gillespie. In other words, a well-maintained memory of past events will almost certainly be helpful for guiding future actions, even if the memory and the plan for the future do not involve the same neural activity.

Although Gillespie’s results strongly favor memory, further research is needed to sort through some remaining discrepancies. For example, Pfeiffer found that during so-called ‘error trials,’ when the rat did not head directly to the reliable reward location, replay still tended to anticipate where the rat went, a result that diverges from Gillespie’s findings. “Even when the rat, in theory, wants to go back to the home site, if the replay goes somewhere else the rat is more likely to follow that replay,” says Pfeiffer. However, Pfeiffer acknowledges that more direct evidence is necessary. “Whether it is because replay events actually guided the rat back or because the replay event just took the quickest path back and that’s what the rat did, we can’t show causality there,” he says.

Frank thinks Pfeiffer’s error-trial results can be reconciled with Gillespie’s findings, though confirming this will require further investigation. “Maybe what replay is doing is reactivating some previous goal location, in the case of these error trials,” perhaps biasing the animal’s movements toward these old reward locations, says Frank. “But we think that the reactivation itself is not the planning process, it’s a map updating process. And to be clear, that’s our working hypothesis, but we have not demonstrated that yet.”

An alternative possibility is that replay’s function switches between memory and planning, depending on the task. “It wouldn’t surprise me if both memory and planning are possible, and, depending on the specific requirements for the rat, there might be a slight bias for one or the other,” says Pfeiffer. Buzsáki acknowledges that Gillespie’s paradigm reveals a bias toward replay of past events, but he says that further experiments are necessary to fully understand replay’s function. “We can’t deny that in other situations, there are other replays,” says Buzsáki, referring to work such as his own and Pfeiffer’s that doesn’t fully agree with Gillespie’s findings. “If I learned anything over the years about the hippocampus, it is this: A minor change to the experimental design can bring about enormous changes in the hippocampus. And we don’t always understand what that minor change is.”